In early May 2026 the story broke that an AI coding agent had wiped the entire production database of a US SaaS provider, including every backup, in nine seconds. Most reporting on the incident focuses on the agent's behaviour and the question of whether AI tools are reliable enough for production use. Look closer and the story is something else: a failure across three independent architectural layers that the speed of the agent merely made visible. The lessons apply to anyone connecting AI tools with write access to production systems, regardless of which vendor is on the label.

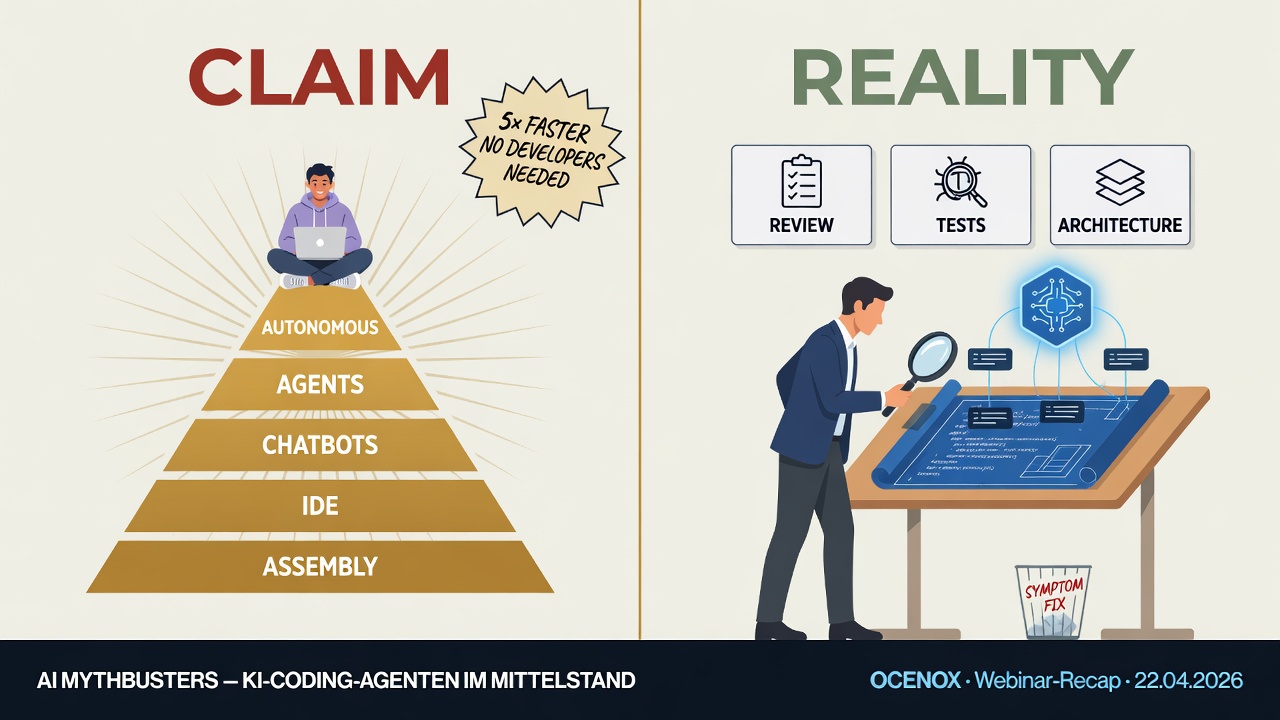

Few topics polarize the mid-market right now like AI coding. Vendor claims are big: fully autonomous programming, developer teams become obsolete, a single product owner builds software that used to require ten engineers. The counter-positions are just as loud: hallucinations, security risks, industrial-scale technical debt. Between the two camps sit executives and IT leaders with the sober question: What's actually true — and what should I as a decision-maker take away from it?

On April 22, 2026, I ran a webinar trying to answer that question empirically. The basis: a five-week experiment. A complete ERP/CRM system with 220,000 lines of code, 17 modules and 2,517 tests, written almost entirely by an AI coding agent (Claude Code). This article summarizes the core findings. It's aimed at decision-makers and project leaders in the mid-market who don't want to look at AI from a bird's-eye view, but need to know what actually happens when you deploy it in practice.

A situational report from project practice. Written for decision-makers in German-speaking mid-sized companies who want to deploy AI without inheriting the next Schrems debate on their desk two years from now – and relevant for anyone trying to understand how European AI compliance is actually being done in regulated industries.

Free webinar in English | Two sessions in May 2026 | Online

Choose the session that fits your time zone — same content, same real experiment.

$3/min for the psychic hotline. $17/min for your meeting with 10 developers.

And that's only half the equation.

Over recent years, I've witnessed this across countless organisations: meetings cost twice. Once for everyone's salary in the room. And once for the work that doesn't happen during that time.
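The "cost twice" arithmetic can be sketched roughly as follows. This is a minimal illustration, not a costing model: the hourly rate of $102 is an assumption back-calculated from the $17/min figure for 10 developers, and `opportunity_factor` (how the value of the lost work compares to salary cost) is a hypothetical parameter set to 1.0 purely for illustration.

```python
def meeting_cost(attendees: int, hourly_rate: float, minutes: float,
                 opportunity_factor: float = 1.0) -> dict:
    """Estimate what a meeting costs, counted twice.

    direct      -- salary cost of everyone in the room
    opportunity -- value of the work that does not happen meanwhile,
                   assumed here to be direct * opportunity_factor
    """
    direct = attendees * hourly_rate * minutes / 60
    opportunity = direct * opportunity_factor
    return {
        "direct": direct,
        "opportunity": opportunity,
        "total": direct + opportunity,
    }


# Assumed figures: 10 developers at $102/hour, i.e. $17 per meeting minute.
per_minute = meeting_cost(attendees=10, hourly_rate=102, minutes=1)
per_hour = meeting_cost(attendees=10, hourly_rate=102, minutes=60)
print(per_minute)
print(per_hour)
```

Under these assumptions, each minute costs $17 in salary and the same again in work not done, so a one-hour meeting comes to roughly $2,040 rather than $1,020.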

Not because your team is too slow. But because your QA sits in the same location as your developers.

Every Scrum Master knows the pattern: the last two days of a sprint belong to QA. Developers twiddle their thumbs or start new stories that are guaranteed not to finish. The result: carry-over. Sprint after sprint.

The usual response? Form a separate QA team that works "vertically" across multiple Scrum teams. That solves one problem—and creates three new ones.

Over recent years, I've repeatedly observed the same pattern across my projects. It became particularly evident when I managed multinational teams building a company-wide data platform in automotive development, where the problem with vertical QA structures showed its full impact: the QA bottleneck at sprint end isn't a capacity problem. It's a timezone problem.

Fixed price or Time & Material? Over the last few years, I've accompanied dozens of IT projects where this question became a point of contention – often before a single line of code was written. Purchasing departments insist on fixed prices because, for them, budget certainty isn't negotiable. Project leaders, meanwhile, know that requirements in complex software projects will inevitably change. The result: contracts that are formally fulfilled "successfully" but miss the actual need.

When 25 minutes of downtime can mean seven-figure revenue losses

Friday morning, 5 December 2025. Once again, thousands of websites worldwide display nothing but "500 Internal Server Error". Once again, Cloudflare is the culprit. And once again – just as on 18 November – it catches businesses completely unprepared.

The latest outage officially lasted about 25 minutes, but affected around 28% of all HTTP traffic that Cloudflare processes. The November outage was significantly more severe: for over four hours, services like ChatGPT, X (formerly Twitter), Discord, PayPal and countless other platforms were unreachable. Cloudflare itself called it the worst outage since 2019.

The mandate is clear: "We need a new ERP system." Perhaps support for your current system is ending. Perhaps your processes have become so convoluted that nobody can make sense of them anymore. Or you're a growing company starting with a proper ERP for the first time. The goal is clear—but how do you get from a blank page to a tender that actually delivers what you need?

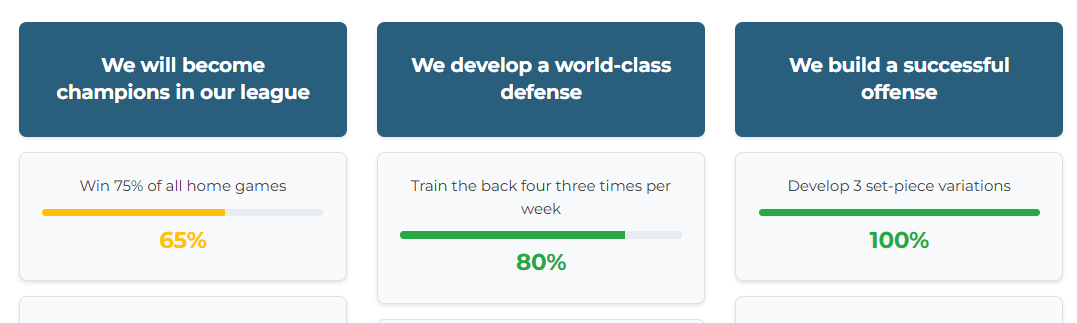

The Problem with OKR Tools in Practice

Over the past few years, I have supported several OKR implementations – from retail corporations to mid-sized companies. A recurring pattern emerged: enthusiasm for OKRs was there, the methodology was convincing, but implementation stalled at an unexpected point – when selecting the tool.